SmartAPM framework for adaptive power management in wearable devices using deep reinforcement learning

Dataset and data pre-processing

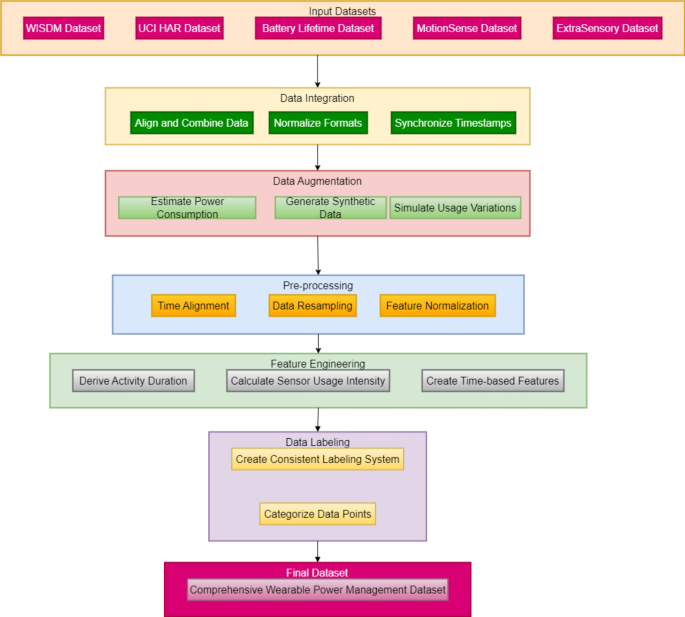

In this study, we developed a custom dataset for adaptive power management in wearable devices by combining several publicly available datasets and augmenting them with synthetic power consumption data. The datasets used include the WISDM Dataset15, the UCI Machine Learning Repository’s Human Activity Recognition dataset17, the Battery Lifetime Dataset18, the MotionSense Dataset15, and the ExtraSensory Dataset19.

To ensure that the dataset was suitable for training machine learning models, and deep reinforcement learning (DRL) algorithms in particular, we applied a series of preprocessing steps aimed at improving data quality, handling missing or inconsistent values, and preparing the data for feature extraction. We augmented the combined data with synthetic power consumption profiles based on device specifications and typical usage patterns. Preprocessing involved time alignment, resampling, and normalisation to ensure consistency across all features; each stage is described in detail below. Figure 3 presents the combination of datasets used in the present study.

Combination of Datasets for the present study.

Data integration and synchronization

The first phase of preprocessing consolidated data from the various sources. Because the datasets had different temporal resolutions and feature types, we used time alignment to synchronize the data across all features, which was critical for creating a cohesive dataset with a common time base. We resampled the data from each source onto regular intervals so that timestamps were synchronized and every feature was represented at the same points in time. This ensured that comparisons between data points were meaningful23,24,25.
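The paper does not state which interpolation scheme was used for resampling. As a minimal sketch, assuming linear interpolation onto a fixed grid (the function name `resample_linear` is hypothetical), an irregular sensor stream can be aligned to a regular time base as follows:

```python
from bisect import bisect_left


def resample_linear(timestamps, values, start, stop, step):
    """Resample an irregularly sampled stream onto a regular time grid.

    `timestamps` must be sorted ascending. Grid points before the first or
    after the last observation take the nearest observed value.
    """
    n_points = int((stop - start) // step) + 1
    grid, resampled = [], []
    for k in range(n_points):
        t = start + k * step
        i = bisect_left(timestamps, t)
        if i == 0:
            v = values[0]
        elif i == len(timestamps):
            v = values[-1]
        else:
            # Linear interpolation between the two neighbouring samples.
            t0, t1 = timestamps[i - 1], timestamps[i]
            v0, v1 = values[i - 1], values[i]
            v = v0 + (v1 - v0) * (t - t0) / (t1 - t0)
        grid.append(t)
        resampled.append(v)
    return grid, resampled


# Accelerometer samples arriving every 2 s, aligned to a 1 s grid.
grid, accel = resample_linear([0, 2, 4], [0.0, 2.0, 4.0], start=0, stop=4, step=1)
print(grid, accel)  # [0, 1, 2, 3, 4] [0.0, 1.0, 2.0, 3.0, 4.0]
```

Applying the same grid to every source gives all features shared timestamps, which is what makes the later joins and comparisons well defined.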

Handling missing values

Missing values frequently pose a challenge when analyzing real-world datasets. We used mean imputation to replace missing values in numerical attributes; this method was selected because it is simple and preserves the mean of each feature while filling the gaps left by unavailable data. For categorical features, such as user activity or device state, we used mode imputation, replacing missing values with the most frequent value of that feature24,26.
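A minimal sketch of the two imputation rules, assuming missing entries are encoded as `None` (the helper name `impute_column` is hypothetical):

```python
from collections import Counter
from statistics import mean


def impute_column(column, categorical=False):
    """Fill None entries with the column mean (numerical) or mode (categorical)."""
    observed = [v for v in column if v is not None]
    if categorical:
        # Most frequent observed category; ties go to the first one encountered.
        fill = Counter(observed).most_common(1)[0][0]
    else:
        fill = mean(observed)
    return [fill if v is None else v for v in column]


print(impute_column([1.0, None, 3.0]))                                  # [1.0, 2.0, 3.0]
print(impute_column(["walk", None, "walk", "sit"], categorical=True))   # ['walk', 'walk', 'walk', 'sit']
```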

Outlier detection and removal

Outliers can distort the relationships between variables and reduce the effectiveness of machine learning algorithms. We used Z-score analysis to detect outliers in the numerical variables, including accelerometer readings, power consumption, and CPU utilization. Data points with Z-scores greater than 3 or less than −3 were classified as outliers. Depending on the distribution of the feature, these outliers were either capped at the maximum or minimum allowable values or removed12,17.
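The text does not define the "allowable values" used for capping. The sketch below assumes they are the mean ± 3 standard deviations of the feature and shows both the capping and the removal variant; the function names are hypothetical.

```python
from statistics import mean, pstdev


def cap_outliers(values, threshold=3.0):
    """Clip values with |z| > threshold to mean ± threshold * std (assumed cap)."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return list(values)
    lo, hi = mu - threshold * sigma, mu + threshold * sigma
    return [min(max(v, lo), hi) for v in values]


def drop_outliers(values, threshold=3.0):
    """Keep only values whose |z-score| is at most the threshold."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return list(values)
    return [v for v in values if abs(v - mu) / sigma <= threshold]


cpu_usage = [10.0] * 19 + [100.0]  # one spike among steady readings
print(drop_outliers(cpu_usage))     # the 100.0 spike (z ≈ 4.4) is removed
print(cap_outliers(cpu_usage)[-1])  # spike clipped to mean + 3 * std
```

Note that with n samples the largest attainable |z| under the population standard deviation is √(n − 1), so the ±3 rule can only fire on windows of at least 11 samples.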

Handling class imbalance

Because user activities and power consumption patterns are diverse, our dataset was prone to class imbalance. For example, activities such as “sleeping” may have many more samples than activities like “exercising” or “working.” To address this issue, we employed the Synthetic Minority Over-sampling Technique (SMOTE), which creates synthetic samples for underrepresented classes by interpolating between existing minority-class samples and their nearest neighbours. This balanced the dataset and improved the model’s capacity to learn from infrequent patterns4,5,6,8,9.
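The core SMOTE step can be sketched in a few lines. This is an illustrative, stdlib-only version for a single minority class, not the implementation used in the study; in practice the imbalanced-learn package provides `imblearn.over_sampling.SMOTE`. The function name and the example feature tuples are hypothetical.

```python
import random


def smote(minority, n_synthetic, k=5, seed=0):
    """SMOTE-style oversampling for one minority class.

    Each synthetic point lies on the line segment between a randomly chosen
    minority sample and one of its k nearest minority-class neighbours.
    Requires at least two minority samples.
    """
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_synthetic):
        i = rng.randrange(len(minority))
        x = minority[i]
        # k nearest neighbours of x (squared Euclidean distance), excluding x itself.
        neighbours = sorted(
            (p for j, p in enumerate(minority) if j != i),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(x, p)),
        )[:k]
        nn = rng.choice(neighbours)
        gap = rng.random()  # uniform in [0, 1)
        synthetic.append(tuple(a + gap * (b - a) for a, b in zip(x, nn)))
    return synthetic


# Hypothetical normalised (accelerometer magnitude, CPU usage) pairs for "exercising".
exercising = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
new_samples = smote(exercising, n_synthetic=10, k=2)
```

Because every new point is a convex combination of two real samples, oversampling stays inside the region already occupied by the minority class.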

Feature engineering

Feature engineering is essential for enhancing the predictive capabilities of machine learning models. Our approach emphasized extracting features from sensor data, user activity, and power consumption4,5,6,9. The extracted features fall into three primary categories:

1. Temporal Features: Temporal patterns in sensor data were analyzed through rolling statistics, including mean, variance, and standard deviation, across different time windows. The Fast Fourier Transform (FFT) was used to analyze the frequency-domain characteristics of the accelerometer and gyroscope data.

2. Contextual Features: Contextual information was obtained from the user’s location using GPS-based clustering, alongside activity recognition implemented via a pre-trained Convolutional Neural Network (CNN) that processes accelerometer and gyroscope data. Device state indicators, including screen status, Wi-Fi connectivity, and battery level, were also included. These features describe the context and environment in which the device is used24,25,26,27.

3. Power Consumption Features: Power-related features were extracted, encompassing component-wise power consumption (e.g., CPU and screen), cumulative energy usage across various time intervals, and transitions between power states. These features are essential for accurately modeling the energy behavior of the wearable device.
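The rolling statistics used for the temporal features can be sketched as a trailing window over a sensor stream. The window is counted in samples, so a 1-minute window at a sampling rate of f Hz corresponds to `window = 60 * f`; the function name is hypothetical.

```python
from collections import deque
from statistics import fmean, pvariance


def rolling_stats(signal, window):
    """Return (mean, variance, std) for every full trailing window of `window` samples."""
    buf = deque(maxlen=window)  # oldest sample drops out automatically
    stats = []
    for x in signal:
        buf.append(x)
        if len(buf) == window:
            vals = list(buf)
            var = pvariance(vals)
            stats.append((fmean(vals), var, var ** 0.5))
    return stats


# e.g. accelerometer magnitude sampled once per second, 2-sample window
for m, v, s in rolling_stats([1.0, 2.0, 3.0, 4.0], window=2):
    print(m, v, s)
```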

Feature selection

After the initial feature set was extracted, a multi-phase feature selection procedure was applied to reduce dimensionality and retain only the most salient features:

- Correlation-Based Feature Selection (CFS): This method was used to remove highly correlated features, reducing multicollinearity and improving model efficiency.

- Sequential Forward Selection (SFS): This approach identified the most pertinent subset of features by adding features one at a time, at each step choosing the feature that most improved model performance.

- Principal Component Analysis (PCA): PCA further reduced the dimensionality of the feature set while retaining 95% of the variance, preserving essential information without adding unnecessary complexity.

Data normalization

Min-max normalization was applied so that all features were on a comparable scale: each numerical feature \(x\) was rescaled as \(x' = (x - x_{\min}) / (x_{\max} - x_{\min})\), which maps it onto the range 0 to 1. This is particularly important for machine learning algorithms that are sensitive to feature scale, including deep learning models.
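A minimal sketch of min-max scaling, assuming (as is common practice, though not stated in the text) that the range is learned on training data and reused for any later data; the function names are hypothetical.

```python
def fit_min_max(train_values):
    """Learn the scaling range from training data only."""
    return min(train_values), max(train_values)


def apply_min_max(values, lo, hi):
    """Map values with x' = (x - lo) / (hi - lo); a constant feature maps to 0."""
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


lo, hi = fit_min_max([20, 60, 100])          # e.g. CPU usage in percent
print(apply_min_max([20, 60, 100], lo, hi))  # [0.0, 0.5, 1.0]
```

Values outside the training range fall outside [0, 1], which is usually preferable to silently refitting the scaler on test data.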

Synthetic power consumption profiles

To enrich the dataset and model realistic power consumption scenarios, we created synthetic power consumption profiles derived from established device specifications (e.g., battery capacity, processor type, screen size) and common usage patterns (e.g., idle, active, screen on/off). The synthetic profiles significantly improved the model’s generalization across various usage scenarios, although these synthetic data points may not accurately represent real-world conditions24,26.
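The paper does not publish its generator. The sketch below only illustrates the general idea of mapping a sequence of usage states to noisy power samples and a draining battery. The per-state power values, the 5% Gaussian noise, and the 1000 mWh capacity are all hypothetical placeholders, not figures from the study.

```python
import random

# Hypothetical per-state average power draw in milliwatts (NOT values from the paper).
STATE_POWER_MW = {"idle": 5.0, "active": 40.0, "screen_on": 120.0}


def synthetic_profile(states, step_s=60, noise_frac=0.05, battery_mwh=1000.0, seed=0):
    """Map usage states to noisy power samples (mW) and battery level (%)."""
    rng = random.Random(seed)
    remaining = battery_mwh
    power, battery = [], []
    for s in states:
        nominal = STATE_POWER_MW[s]
        p = max(0.0, rng.gauss(nominal, noise_frac * nominal))
        remaining = max(0.0, remaining - p * step_s / 3600.0)  # mW * h -> mWh
        power.append(p)
        battery.append(100.0 * remaining / battery_mwh)
    return power, battery


power, battery = synthetic_profile(["idle"] * 10 + ["screen_on"] * 10)
```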

Final dataset

These preprocessing procedures prepared the dataset for model training. The dataset encompasses time-based, contextual, and power consumption features derived from multiple users and devices. It comprises both raw sensor data and engineered features that capture intricate interactions between user behavior and power consumption patterns.

Although these preprocessing techniques improved the quality of the dataset, we recognize certain limitations. Synthetic data points, especially power consumption profiles, may not fully represent all real-world variables, such as environmental factors and hardware variations. Nevertheless, the dataset’s comprehensive and diverse characteristics provide a robust basis for developing adaptive power management strategies with deep learning techniques. Table 3 presents a sample of the dataset.

The samples shown in Table 3 represent a snapshot of wearable device usage for a specific user across different times and activities. Each column captures a moment in time, providing a comprehensive view of the device’s state and the user’s context. The data include temporal information (timestamp, time since last charge), user activity and location, device status (battery level, CPU usage, screen brightness, Wi-Fi state), sensor readings (accelerometer, gyroscope, ambient light, temperature), and power-related metrics (consumption rate). The Pattern ID field indicates the usage pattern that the system has recognized for each sample.

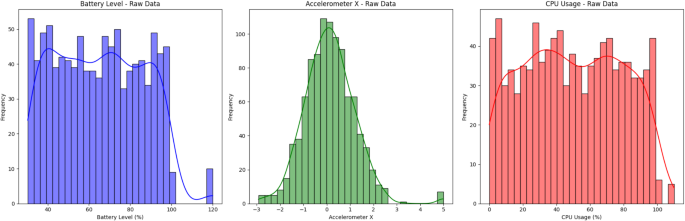

Histograms of the raw feature distributions, showing outliers in key features such as Battery Level, Accelerometer X, and CPU Usage.

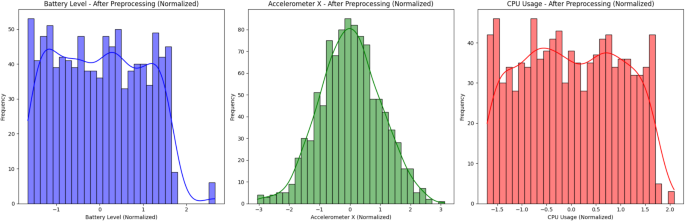

Histograms of the feature distributions after preprocessing for key features such as Battery Level, Accelerometer X, and CPU Usage.

Figure 4 displays histograms that depict the raw distributions of essential features, including Battery Level, Accelerometer X, and CPU Usage. The raw distributions indicate the existence of outliers, which may impact the model’s performance by introducing noise and distorting the results. Figure 5 illustrates the distributions of these features subsequent to preprocessing. The preprocessing steps, including outlier removal and data normalisation, have produced cleaner distributions. This transformation enhances data consistency, mitigates the influence of extreme values, and improves suitability for deep learning model input. The figures illustrate the enhancement of dataset quality and reliability through preprocessing.

The proposed SmartAPM method

The proposed adaptive power management system for wearable devices employs a multi-stage approach to optimize energy consumption while maintaining user experience. Our methodology encompasses several key components: feature extraction, reinforcement learning, prediction of future power needs, decision-making for power management strategies, and execution of power-saving measures. These components create a robust, user-adaptive system capable of real-time power optimization. In this section, we detail each component, beginning with the critical feature extraction process, which forms the foundation of our adaptive approach.

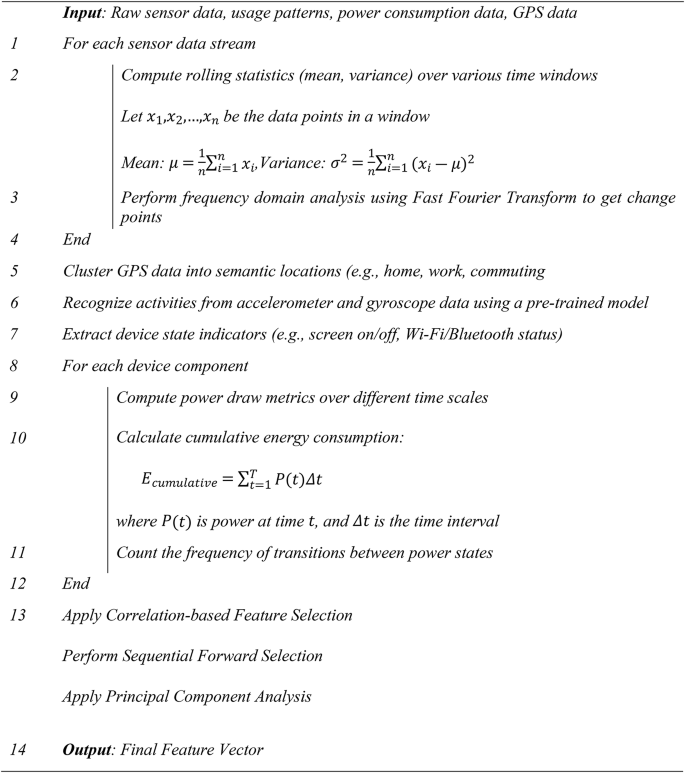

Feature extraction

Feature extraction transforms raw sensor data and usage patterns into informative, non-redundant features, facilitating the subsequent learning and generalization steps. This phase is crucial for dimensionality reduction and for creating a robust feature space that captures the salient characteristics of user behaviour and device states relevant to power consumption24,27. The steps in feature extraction are summarized in Algorithm 1.

Feature extraction process.

Temporal features are extracted to capture the time-dependent aspects of device usage and power consumption. These include:

1. Time-series statistical measures: For each sensor stream, we compute rolling means, variances, and higher-order moments over various time windows (e.g., 1-minute, 5-minute, and 15-minute intervals). This approach captures short-term fluctuations and longer-term trends in device usage.

2. Frequency-domain features: The Fast Fourier Transform (FFT) is applied to sensor data streams to extract frequency components, providing insights into periodic behaviours in device usage and user activities.

3. Temporal pattern indicators: We implement change point detection algorithms to identify significant shifts in sensor readings or power consumption patterns, which may indicate transitions between user activities or device states.
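As an illustration of a frequency-domain feature, the sketch below extracts the dominant frequency of a sensor stream. It uses a direct O(n²) DFT for clarity; a production pipeline would call an FFT routine such as `numpy.fft.rfft`, which computes the same coefficients. The choice of "dominant non-DC frequency" as the feature is an assumption for illustration.

```python
import cmath
import math


def dominant_frequency(signal, sample_rate_hz):
    """Return the frequency (Hz) of the largest non-DC DFT component."""
    n = len(signal)
    mean = sum(signal) / n
    x = [v - mean for v in signal]  # remove DC offset (e.g. gravity on the accelerometer)
    best_k, best_mag = 0, -1.0
    for k in range(1, n // 2 + 1):  # positive-frequency bins up to Nyquist
        coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        if abs(coeff) > best_mag:
            best_k, best_mag = k, abs(coeff)
    return best_k * sample_rate_hz / n


# 2 s of a 2 Hz oscillation (e.g. walking cadence) sampled at 50 Hz.
walk = [math.sin(2 * math.pi * 2 * t / 50) for t in range(100)]
print(dominant_frequency(walk, 50))  # 2.0
```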

Contextual features are derived to encapsulate the environmental and situational factors influencing power consumption:

1. Location-based features: We discretize GPS data into semantic locations (e.g., home, work, commuting) using clustering algorithms supplemented by time-of-day information to capture location-dependent usage patterns.

2. Activity recognition features: Employing a pre-trained convolutional neural network (CNN), we extract high-level activity recognition features from accelerometer and gyroscope data, categorizing user states (e.g., stationary, walking, running).

3. Device state indicators: Binary and categorical features are generated to represent various device states, such as screen on/off, Wi-Fi/Bluetooth connectivity, and running applications.
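The clustering algorithm is not named in the text. As a hedged illustration, the sketch below uses plain k-means on (latitude, longitude) pairs and a hypothetical night-time heuristic to tag one cluster as "home". Euclidean distance in degrees is an approximation that is only acceptable over short distances; a haversine distance would be more accurate.

```python
import math
from collections import Counter


def kmeans(points, k, iters=20):
    """Lloyd's k-means on (lat, lon) pairs; the first k points seed the centroids."""
    centroids = [tuple(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: nearest centroid by Euclidean distance in degrees.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return labels, centroids


def label_home(labels, hours):
    """Hypothetical heuristic: the cluster visited most between 22:00 and 06:00 is 'home'."""
    night = [label for label, h in zip(labels, hours) if h >= 22 or h < 6]
    return Counter(night).most_common(1)[0][0] if night else None


points = [(0.0, 0.0), (10.0, 10.0), (0.1, 0.0), (10.1, 10.0), (0.0, 0.1), (10.0, 10.1)]
labels, centroids = kmeans(points, k=2)
home_cluster = label_home(labels, hours=[23, 14, 2, 15, 1, 13])
```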

We derive a set of features specifically tailored to characterize power consumption:

1. Component-wise power metrics: Utilizing the available power consumption data, we extract features representing the power draw of individual device components (e.g., display, CPU, sensors) over different time scales.

2. Cumulative energy consumption: We compute cumulative energy consumption features over various time horizons (e.g., past hour, day) to capture longer-term power usage trends.

3. Power state transition frequencies: Features are extracted to represent the frequency of transitions between different power states, providing insights into the stability of power consumption patterns.
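Two of these power features reduce to simple arithmetic. A minimal sketch, assuming evenly spaced power samples in milliwatts and a discrete sequence of power-state labels (both function names are hypothetical):

```python
def cumulative_energy_mwh(power_mw, step_s):
    """Running energy total (mWh) from evenly spaced power samples (rectangle rule)."""
    total, out = 0.0, []
    for p in power_mw:
        total += p * step_s / 3600.0  # mW * s -> mWh
        out.append(total)
    return out


def transition_rate(states, step_s):
    """Power-state transitions per hour over the observed sequence."""
    changes = sum(1 for a, b in zip(states, states[1:]) if a != b)
    hours = len(states) * step_s / 3600.0
    return changes / hours


print(cumulative_energy_mwh([60.0, 60.0], step_s=60))                    # [1.0, 2.0]
print(transition_rate(["idle", "active", "active", "idle"], step_s=900))  # 2.0
```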

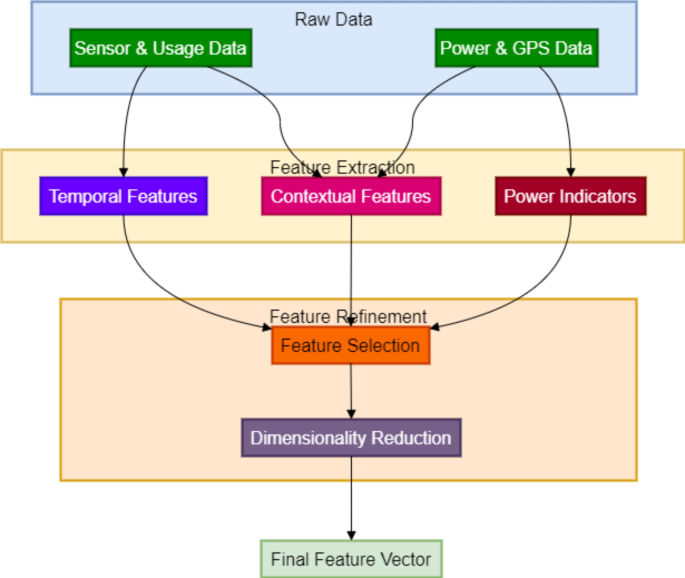

Steps in Feature Selection.

To mitigate the curse of dimensionality and enhance model performance, we employ a two-stage feature selection process as presented in Fig. 6.

1. Filter methods: We apply correlation-based feature selection (CFS) to remove highly correlated features, reducing multicollinearity in the feature set.

2. Wrapper methods: Sequential forward selection (SFS), wrapped around our deep learning model, identifies the most informative feature subset, optimizing for predictive performance and computational efficiency.
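The wrapper step can be sketched independently of the model. Here `score` stands for any higher-is-better evaluation of a feature subset, such as validation accuracy of a model trained on those features; the toy scoring function in the example is hypothetical.

```python
def sequential_forward_selection(candidates, score, max_features):
    """Greedy SFS: repeatedly add the feature that most improves score(subset).

    Stops early when no remaining feature improves the best score so far.
    """
    selected, best = [], float("-inf")
    remaining = list(candidates)
    while remaining and len(selected) < max_features:
        trials = [(score(selected + [f]), f) for f in remaining]
        top_score, top_feature = max(trials)
        if top_score <= best:
            break
        selected.append(top_feature)
        remaining.remove(top_feature)
        best = top_score
    return selected


# Toy score: per-feature usefulness minus a cost of 0.1 per selected feature.
usefulness = {"cpu": 0.5, "screen": 0.3, "gps": 0.0}
toy_score = lambda subset: sum(usefulness[f] for f in subset) - 0.1 * len(subset)
print(sequential_forward_selection(usefulness, toy_score, max_features=3))  # ['cpu', 'screen']
```

Each round trains or evaluates the model once per remaining candidate, which is why SFS is applied after the cheaper correlation filter has already shrunk the feature set.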

Furthermore, we apply Principal Component Analysis (PCA)20 to the selected feature set, retaining components that explain 95% of the variance. This step ensures a compact yet informative representation of the input space for subsequent modeling stages. The steps in feature selection are illustrated in Fig. 6. As explained in the next section, the resulting feature vector is used to train the reinforcement learning networks.
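The 95% variance criterion determines how many principal components are kept. Given the eigenvalues of the feature covariance matrix, the count is the smallest prefix whose cumulative share reaches the target, as in the sketch below (the function name is hypothetical; scikit-learn's `PCA` applies the same rule when `n_components` is set to a float such as `0.95`).

```python
def components_for_variance(eigenvalues, target=0.95):
    """Smallest number of principal components whose explained variance reaches target."""
    vals = sorted(eigenvalues, reverse=True)
    total = sum(vals)
    cumulative = 0.0
    for i, v in enumerate(vals, start=1):
        cumulative += v
        if cumulative / total >= target:
            return i
    return len(vals)


# Explained-variance shares 0.60, 0.90, 0.98, 1.00 -> three components reach 95%.
print(components_for_variance([6.0, 3.0, 0.8, 0.2]))  # 3
```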

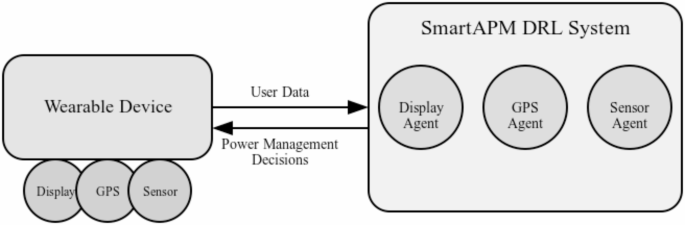

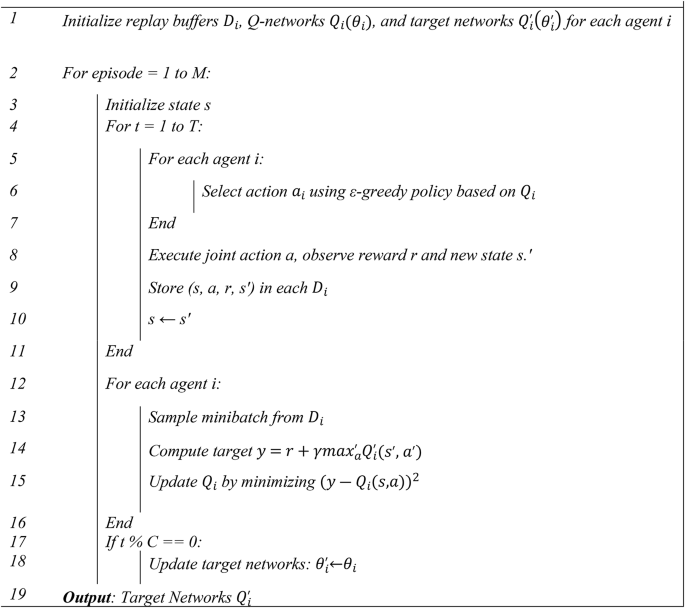

Reinforcement learning in SmartAPM

After the feature extraction process, SmartAPM employs a sophisticated multi-agent deep reinforcement learning (DRL) system to optimize power management in wearable devices.

- SmartAPM utilizes a multi-agent DRL system in which each agent manages a specific device component (e.g., display, CPU, sensors, network interfaces). This decentralized approach allows for fine-grained control and adaptability.

- The state space \(S\) for each agent includes the component-specific features from the feature extraction step, the component’s current power consumption, the remaining battery life, and the time since the last charge.

- The action space \(A\) for each agent includes adjusting component-specific parameters (e.g., screen brightness, CPU frequency) and turning certain functionalities on or off.

- The reward function \(R\) is designed to balance power savings with user experience, as defined by Eq. 1.

$$R = w_1 \cdot \text{PowerSavings} + w_2 \cdot \text{UserSatisfaction} - w_3 \cdot \text{ActionPenalty} \tag{1}$$

where \(\text{PowerSavings}\) is the reduction in power consumption compared with a baseline, \(\text{UserSatisfaction}\) is derived from user interaction metrics and feedback, \(\text{ActionPenalty}\) discourages frequent changes to promote stability, and \(w_1\), \(w_2\), and \(w_3\) are tunable weights.
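Eq. 1 can be evaluated directly once its three terms are quantified. The text does not define how each term is computed, so the normalisations below are assumptions for illustration: power savings as the fractional reduction relative to a baseline policy, and the action penalty as the number of component parameters changed since the previous step. The weights and the satisfaction score in the example are hypothetical.

```python
def power_savings(baseline_mw, actual_mw):
    """Assumed normalisation: fractional power reduction relative to a baseline policy."""
    return (baseline_mw - actual_mw) / baseline_mw


def action_penalty(prev_action, action):
    """Assumed penalty: number of component parameters changed since the last step."""
    return sum(1 for key, value in action.items() if prev_action.get(key) != value)


def reward(savings, satisfaction, penalty, w1, w2, w3):
    """Eq. 1: R = w1 * PowerSavings + w2 * UserSatisfaction - w3 * ActionPenalty."""
    return w1 * savings + w2 * satisfaction - w3 * penalty


r = reward(
    power_savings(baseline_mw=100.0, actual_mw=80.0),  # 20% saving
    satisfaction=0.9,                                  # hypothetical score in [0, 1]
    penalty=action_penalty({"brightness": 0.8}, {"brightness": 0.5}),
    w1=0.5, w2=0.4, w3=0.1,
)
print(round(r, 2))  # 0.36
```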

Each agent employs a Deep Q-Network (DQN)21 to learn the optimal policy. The DQN architecture consists of an input layer whose dimensionality matches the state space, multiple fully connected hidden layers with ReLU activation, and an output layer whose dimensionality matches the action space.

The Q-value update rule is given by Eq. 2.

$$Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_t + \gamma \max_{a'} Q(s_{t+1}, a') - Q(s_t, a_t) \right] \tag{2}$$

where \(\alpha\) is the learning rate, \(\gamma\) is the discount factor, and \(r_t\) is the reward at time \(t\).
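Eq. 2 is easiest to see in its tabular form, sketched below with a dictionary keyed by (state, action). A DQN replaces the table with a neural network and minimises the squared TD error by gradient descent, but the target \(r_t + \gamma \max_{a'} Q(s_{t+1}, a')\) is the same. The state and action names are hypothetical.

```python
from collections import defaultdict


def q_update(Q, s, a, r, s_next, actions, alpha=0.1, gamma=0.99):
    """Apply Eq. 2 once to a tabular Q and return the TD error."""
    target = r + gamma * max(Q[(s_next, a2)] for a2 in actions)
    td_error = target - Q[(s, a)]
    Q[(s, a)] += alpha * td_error
    return td_error


Q = defaultdict(float)            # unseen (state, action) pairs start at 0
Q[("battery_low", "dim")] = 2.0
delta = q_update(Q, "battery_mid", "dim", r=1.0, s_next="battery_low",
                 actions=["dim", "bright"], alpha=0.5, gamma=0.5)
print(delta, Q[("battery_mid", "dim")])  # 2.0 1.0
```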

We implement experience replay to improve stability and reduce correlations in the observation sequence. Each agent maintains a replay buffer \(D\) of fixed size, and the DQN is updated by sampling mini-batches from this buffer.

A separate target network, whose weights are periodically copied from the online network, generates the target Q-values used in Eq. 2, which stabilizes training.
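The two stabilisation mechanisms can be sketched without a deep learning framework. Here network weights are stood in for by a plain dictionary, and the buffer capacity and synchronisation interval are hypothetical values.

```python
import copy
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size experience replay buffer D: the oldest transitions are evicted first."""

    def __init__(self, capacity, seed=0):
        self.buffer = deque(maxlen=capacity)
        self.rng = random.Random(seed)

    def push(self, state, action, reward, next_state):
        self.buffer.append((state, action, reward, next_state))

    def sample(self, batch_size):
        # Uniform sampling without replacement breaks up temporally correlated sequences.
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


def sync_target(step, online_params, target_params, sync_every=1000):
    """Return a deep copy of the online weights every `sync_every` steps, else the old target."""
    if step % sync_every == 0:
        return copy.deepcopy(online_params)
    return target_params


buf = ReplayBuffer(capacity=3)
for t in range(5):
    buf.push(t, 0, 0.0, t + 1)  # only the last three transitions survive
batch = buf.sample(2)
```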

To ensure coherent device-wide power management, we implement a coordination mechanism:

1. Each agent computes its Q-values independently.

2. A central coordinator aggregates these Q-values.

3. The coordinator applies a joint action selection strategy.

The joint action selection uses a softmax (Boltzmann) function to balance exploration and exploitation, as given by Eq. 3.

$$P(a_i \mid s) = \frac{\exp\left(Q(s, a_i)/\tau\right)}{\sum_j \exp\left(Q(s, a_j)/\tau\right)} \tag{3}$$

where \(\tau\) is a temperature parameter controlling exploration: higher values make action selection more uniform, while values close to zero make it nearly greedy.
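The paper does not specify how the coordinator aggregates the agents' Q-values. The sketch below assumes the simplest reading: each agent's action is sampled independently from Eq. 3 and the coordinator collects the results into a joint action. Agent names and Q-values are hypothetical.

```python
import math
import random


def softmax_probs(q_values, tau=1.0):
    """Eq. 3: Boltzmann probabilities; subtracting max(q) avoids overflow in exp."""
    m = max(q_values)
    exps = [math.exp((q - m) / tau) for q in q_values]
    z = sum(exps)
    return [e / z for e in exps]


def coordinate(agent_q_values, tau=1.0, seed=None):
    """Hypothetical coordinator: sample one action index per agent from Eq. 3."""
    rng = random.Random(seed)
    joint = {}
    for agent, qs in agent_q_values.items():
        probs = softmax_probs(qs, tau)
        joint[agent] = rng.choices(range(len(qs)), weights=probs, k=1)[0]
    return joint


# Low temperature: each agent almost always picks its highest-Q action.
print(coordinate({"display": [0.0, 5.0], "cpu": [1.0]}, tau=0.01, seed=0))
```

Subtracting the maximum Q-value leaves the probabilities of Eq. 3 unchanged, because the common factor cancels between numerator and denominator, but it keeps `math.exp` from overflowing at low temperatures.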

The training process for the SmartAPM multi-agent DRL system is outlined in Algorithm 2.

We implement an adaptive learning rate mechanism to enhance the system’s ability to adapt to changing user behaviours and device conditions. The learning rate \(\alpha\) is adjusted according to the temporal difference (TD) error, as given by Eq. 4.

$$\alpha_t = \alpha_0 \cdot \frac{1}{1 + \beta \cdot \left|\delta_t\right|} \tag{4}$$

where \(\alpha_0\) is the initial learning rate, \(\beta\) is a scaling factor, and \(\delta_t = r_t + \gamma \max_{a'} Q(s_{t+1}, a') - Q(s_t, a_t)\) is the temporal difference error.

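Under Eq. 4, the step size shrinks when the TD error is large, damping updates from surprising or noisy transitions, and approaches \(\alpha_0\) as predictions become accurate. This is intended to let the system keep adapting to changing behaviour without destabilizing policies that already work well in familiar situations.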
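Eq. 4 and the TD error it depends on are one-liners. The sketch below (function names hypothetical) evaluates the step size for a few TD-error magnitudes to show how it decays as \(|\delta_t|\) grows.

```python
def td_error(r, gamma, q_next_max, q_current):
    """delta_t = r_t + gamma * max_a' Q(s_{t+1}, a') - Q(s_t, a_t)."""
    return r + gamma * q_next_max - q_current


def adaptive_alpha(alpha0, beta, delta):
    """Eq. 4: alpha_t = alpha0 / (1 + beta * |delta_t|)."""
    return alpha0 / (1.0 + beta * abs(delta))


for delta in (0.0, 1.0, 3.0):
    print(delta, adaptive_alpha(alpha0=0.1, beta=1.0, delta=delta))
```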

To quickly adapt to individual users, SmartAPM employs transfer learning. A base model is pre-trained on a diverse dataset of user behaviours. This model is then fine-tuned for each user, allowing for rapid personalization while retaining general power management strategies. The transfer learning process involves:

1. Freezing the lower layers of the DQN.

2. Replacing the output layer with a new layer initialized for the user’s action space.

3. Fine-tuning the new layer and the last few hidden layers on user-specific data.

This approach enables SmartAPM to achieve personalized power management within 24 h of use, as mentioned in the abstract. By leveraging this sophisticated multi-agent DRL framework, SmartAPM can make intelligent, adaptive decisions about power management in real-time, significantly extending battery life while maintaining a high-quality user experience.

Tuning of weights (α, β, γ) for power savings, user satisfaction, and stability

Within the SmartAPM framework, the reward function is crucial for reconciling various objectives, including energy conservation, user contentment, and system stability. The reward function R is defined by Eq. 5.

$$R = W_1 \times \text{Power}_{\text{saving}} + W_2 \times \text{User}_{\text{satisfaction}} - W_3 \times \text{Action}_{\text{penalty}} \tag{5}$$

Here, \(\text{Power}_{\text{saving}}\) is the decrease in energy consumption relative to a baseline; \(\text{User}_{\text{satisfaction}}\) captures the quality of the user experience and is generally derived from user interactions, device performance metrics, and feedback; and \(\text{Action}_{\text{penalty}}\) penalizes excessive or superfluous modifications to system parameters to ensure stability.

The weights \(W_1\), \(W_2\), and \(W_3\) (designated α, β, and γ, respectively, in the rest of this section; these are distinct from the learning rate, scaling factor, and discount factor used in Eqs. 2 and 4) mediate the trade-off among these three objectives. Adapting these weights allows SmartAPM to regulate power consumption efficiently while preserving high user satisfaction and system stability. The following paragraphs outline how the weights are adjusted to accommodate the varied requirements of different devices and users.

- \(\alpha\) (\(W_1\)) Weight for Power Savings: Determines how much significance the system assigns to minimizing power consumption. A higher α emphasizes energy efficiency, which is essential for extending battery life in wearable devices.

- \(\beta\) (\(W_2\)) Weight for User Satisfaction: Signifies the importance placed on ensuring a satisfactory user experience. A higher β helps ensure that compromises made for power conservation do not substantially impair the user experience. This is especially important for wearable devices, where user satisfaction strongly influences adoption and sustained usage.

- \(\gamma\) (\(W_3\)) Weight for Action Penalty: Regulates the penalty imposed for frequent modifications to the system. A higher γ penalizes excessive adjustments, fostering more stable and consistent system behaviour and preventing superfluous or disruptive changes that could degrade the user experience or the device’s stability.

Tuning process for weights (α, β, γ)

Adaptive adjustment of these weights is crucial for SmartAPM to reconcile power management with user experience across various devices and usage contexts. The weights α, β, and γ are adjusted as follows:

- Initial Tuning: Weights are set according to the device’s specifications (battery capacity, hardware, usage patterns). Devices equipped with larger batteries emphasize power conservation (α), whereas devices requiring more interaction concentrate on user satisfaction (β).

- User-Centric Personalisation: SmartAPM progressively learns user preferences via transfer learning. If a user frequently requires high performance (e.g., fitness applications), user satisfaction (β) is prioritized.

- Contextual Adjustments: The system dynamically modifies the weights based on device utilization. For example, in low-battery mode it prioritizes power conservation (α), while during demanding tasks it emphasizes user satisfaction (β).

- Ongoing Feedback: The system collects real-time feedback from users to modify the weights. If the user is dissatisfied, the system prioritizes user satisfaction (β); conversely, if battery life is a concern, it shifts toward power conservation (α).

- Reinforcement Learning: SmartAPM uses its DRL system to refine the weight distribution over time, optimizing the balance among energy conservation, user satisfaction, and stability.
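The contextual adjustment can be illustrated with a small rule. The low-battery threshold (20%), the shift size (0.2), and the renormalization to a unit sum are all hypothetical choices, not values given in the paper; the weights are named `w_power`, `w_user`, and `w_penalty` in the code to avoid clashing with the learning-rate symbols.

```python
def adjust_weights(weights, battery_pct, demanding_task, low_battery_pct=20.0, shift=0.2):
    """Hypothetical contextual rule for the reward weights (w_power, w_user, w_penalty).

    Adds `shift` to the power-savings weight when the battery is low, or to the
    user-satisfaction weight during a demanding task, then renormalizes to sum to 1.
    """
    w_power, w_user, w_penalty = weights
    if battery_pct < low_battery_pct:
        w_power += shift
    elif demanding_task:
        w_user += shift
    total = w_power + w_user + w_penalty
    return (w_power / total, w_user / total, w_penalty / total)


print(adjust_weights((0.4, 0.4, 0.2), battery_pct=15.0, demanding_task=False))
print(adjust_weights((0.4, 0.4, 0.2), battery_pct=80.0, demanding_task=True))
```

In the full system such hand-written rules would serve at most as a starting point, with the DRL loop refining the weights from feedback.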

Hybrid learning paradigm

SmartAPM employs a hybrid approach, combining on-device and cloud-based learning to optimize performance and adaptability. On-device learning handles immediate adaptations and privacy-sensitive data, utilizing lightweight versions of DRL models to focus on short-term pattern recognition and quick responses. Complementing this, cloud-based learning performs complex computations and long-term pattern analysis, aggregating anonymized data from multiple users to improve global models. This cloud-based component typically executes during device idle times or when connected to Wi-Fi to minimize data usage. The system dynamically balances these two paradigms based on available device resources, network connectivity, privacy settings, and task complexity, ensuring optimal performance across various usage scenarios.
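The balancing logic between on-device and cloud learning is described only qualitatively. The sketch below is a hypothetical placement policy that encodes the stated conditions (privacy setting, idle time, Wi-Fi, available resources); the field names, the 30% battery floor, and the three return labels are assumptions.

```python
from dataclasses import dataclass


@dataclass
class DeviceContext:
    battery_pct: float
    idle: bool
    on_wifi: bool
    cloud_sharing_allowed: bool  # user privacy setting


def choose_learning_site(ctx: DeviceContext, heavy_task: bool, min_battery_pct: float = 30.0) -> str:
    """Hypothetical placement policy returning 'device', 'cloud', or 'defer'."""
    if not heavy_task:
        return "device"  # short-term adaptation stays on the lightweight local model
    if ctx.cloud_sharing_allowed and ctx.idle and ctx.on_wifi:
        return "cloud"   # long-term analysis on anonymized data, only when idle on Wi-Fi
    if not ctx.cloud_sharing_allowed and ctx.battery_pct >= min_battery_pct:
        return "device"  # privacy setting keeps heavy work local when battery allows
    return "defer"       # wait for idle time, Wi-Fi, or more battery


print(choose_learning_site(DeviceContext(80.0, True, True, True), heavy_task=True))  # cloud
```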

Transfer learning for personalization

To achieve rapid user-specific adaptation, SmartAPM leverages transfer learning techniques. The process begins with a base model pre-trained on a diverse dataset of user behaviors, capturing general power management strategies. Upon deployment to a specific user’s device, this base model undergoes fine-tuning. The lower layers of the deep neural network are frozen to retain general knowledge, while the upper layers are retrained with user-specific data. This approach allows SmartAPM to quickly adapt to individual usage patterns while maintaining a foundation of effective general strategies. The model continues to adapt based on new user data, employing a sliding window approach to prioritize recent behaviours while retaining valuable long-term patterns. This continuous adaptation mechanism enables SmartAPM to adjust to new usage patterns within 24 h, as highlighted in the system’s key features.
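The sliding-window idea can be sketched as a bounded buffer with recency weighting. The exponential decay is an assumption chosen for illustration (the newest sample has weight 1 and each older one is multiplied by `decay`); the paper does not specify the weighting scheme, and the class name is hypothetical.

```python
from collections import deque


class RecentWindow:
    """Keep the last `size` observations and average them with recency weights."""

    def __init__(self, size, decay=0.9):
        self.samples = deque(maxlen=size)  # older observations fall out of the window
        self.decay = decay

    def add(self, x):
        self.samples.append(x)

    def weighted_mean(self):
        n = len(self.samples)
        weights = [self.decay ** (n - 1 - i) for i in range(n)]  # newest sample -> weight 1
        return sum(w * x for w, x in zip(weights, self.samples)) / sum(weights)


# e.g. daily screen-on minutes; the 100 from day one has already left the window.
window = RecentWindow(size=3, decay=0.5)
for minutes in (100.0, 0.0, 0.0, 10.0):
    window.add(minutes)
print(window.weighted_mean())  # 10 / 1.75 ≈ 5.714
```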

Computational efficiency optimization

To maintain a low computational overhead, specifically less than 5% of device resources, SmartAPM utilizes a suite of optimization techniques. Model compression methods, including pruning unnecessary connections in the DNN, quantizing weights to lower-precision representations, and knowledge distillation, are applied to reduce the model’s computational footprint. The system also implements adaptive computation, adjusting the frequency and complexity of model updates based on the rate of change in user behaviour. This allows for simpler models during stable periods and more complex ones when behaviours rapidly change. Efficient data handling is achieved through circular buffers for storing recent experiences and incremental learning techniques that update models without full retraining. When available, SmartAPM leverages on-device AI accelerators and optimizes computations for specific hardware architectures, further enhancing its efficiency on resource-constrained wearable devices.
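Of the compression methods listed, weight quantization is the easiest to illustrate. The sketch below shows symmetric linear int8 quantization with a single per-tensor scale; it is a generic example of the technique, not SmartAPM's actual scheme, and pruning and knowledge distillation are not shown.

```python
def quantize_int8(weights):
    """Symmetric linear quantization: float weights -> int8 codes plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Approximate the original floats from int8 codes."""
    return [c * scale for c in codes]


weights = [0.5, -1.0, 0.25, 0.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
```

Each weight is stored in one byte instead of four (float32), and the reconstruction error per weight is at most half the scale.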

User satisfaction integration

SmartAPM strongly emphasizes user satisfaction, integrating it deeply into its power management strategy. The system monitors implicit feedback through user interactions, such as manual brightness adjustments, app usage patterns, and tracking device usage duration and frequency. Explicit feedback is gathered through periodic micro-surveys and optional detailed feedback forms. This wealth of user data feeds into a sophisticated user satisfaction model, which is then incorporated into the reward function of the DRL system.

SmartAPM adjusts the weights in this reward function to maintain an optimal balance between power savings and user satisfaction. A “frustration detection” mechanism is also implemented, allowing the system to correct any unsatisfactory power management decisions quickly. This comprehensive approach to user satisfaction helps explain the 25% increase in user satisfaction scores mentioned in the paper’s abstract. By integrating these components, SmartAPM achieves a comprehensive, adaptive, and user-centric approach to power management in wearable devices. The system can quickly personalize its behavior, operate efficiently within the constraints of wearable hardware, and continuously balance power savings with user satisfaction. This holistic approach underlies SmartAPM’s significant improvements in battery life and user satisfaction, positioning it as a promising solution for the ongoing challenge of power management in wearable technology.
